Is “Pixel Blitting” in AS3 really worth the effort?

While planning some new routines for the PixelBlitz engine tonight one thing struck me – is it actually worth it?

There are a number of articles across the web about pixel blitting in AS3 (most of them at 8-bit Rocket 🙂), but I did wonder whether anyone had actually run tests to see just what difference it makes in real-world terms.

After all, why mess around “blitting” things about if using a Sprite or MovieClip is just as fast anyway? In fact, you could easily argue that using a native Flash display object gives you far more control (you get to play with scaling, alpha, rotation, animation, sound events and more, easily).

Another thing also struck me: when building up the display for render, the AVM will automatically use a dirty rectangles system. If you’ve got two overlapping MovieClips then it won’t waste time drawing pixels that would otherwise be obscured by the one in front. Traditional blitting, on the other hand, doesn’t care about this. It’ll gleefully copyPixels() until the cows come home, pasting image after image on top of each other (PixelBlitz suffers from this issue too).

[ Side note: It’s true we could add a similar dirty rectangles system to PixelBlitz, to avoid copying data when it’s guaranteed to be overwritten further up the chain. But this is not something we’ve found a fast way to do yet. The potential alpha channel of a bitmap causes the most problems, and the overhead of sorting and checking for overlaps has always taken longer than just brute-force copying everything each time (if you can help, email me!) ]
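To make the side note concrete, here is a minimal sketch of the kind of overlap check it describes. It is written in Python rather than AS3 purely as a language-neutral illustration, and it is not PixelBlitz code: the `Rect` type, the `opaque` flag and the function names are all hypothetical. It only skips a sprite when something fully opaque in front covers it completely, which is why an alpha channel gets in the way, and the nested scan shows where the overhead comes from.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Rect:
    """Hypothetical sprite bounds; not a PixelBlitz or Flash type."""
    x: int
    y: int
    w: int
    h: int
    opaque: bool  # False if the bitmap has any transparent pixels


def covers(front: Rect, back: Rect) -> bool:
    """True if `front` completely contains `back`."""
    return (front.x <= back.x and front.y <= back.y
            and front.x + front.w >= back.x + back.w
            and front.y + front.h >= back.y + back.h)


def visible_sprites(draw_order: List[Rect]) -> List[Rect]:
    """Return the sprites worth blitting, keeping back-to-front order.

    A sprite is skipped only if a fully opaque sprite later in the draw
    order (in front of it) covers it entirely. This is the naive O(n^2)
    scan, so the check itself costs more as the sprite count grows.
    """
    kept = []
    for i, back in enumerate(draw_order):
        hidden = any(front.opaque and covers(front, back)
                     for front in draw_order[i + 1:])
        if not hidden:
            kept.append(back)
    return kept
```

With sprites that carry an alpha channel, as in the tests here, `opaque` would be False for every one of them, so this check would cost time and cull nothing.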

Tonight I decided to write two simple tests. They would measure the speed of the AVM’s dirty rectangle system vs. raw BitmapData copyPixels() power. I was interested in three things: the overall time it took to run the test, the amount of memory it used and the average frame rate (fps).

The Tests

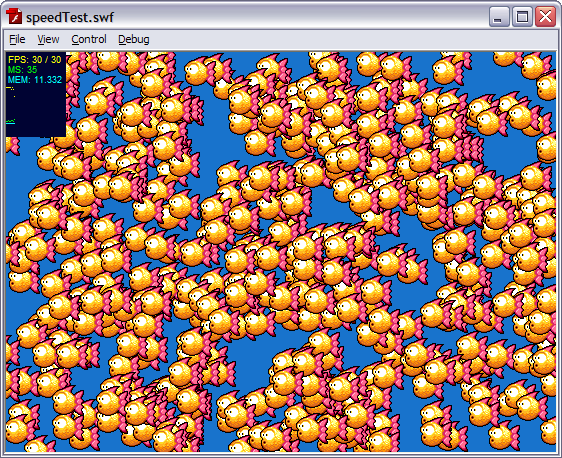

I took a 550 x 400 stage published at 30 fps. All tests were run using the Debug version of the Player (9.0 r 124). The test consisted of creating an array of X sprites: Sprites to test the AVM, PixelSprites to test blitting. Each sprite was 50×50 in size and contained an alpha channel. I then drew all of the sprites onto the stage and moved them along by 4 pixels per frame; if they hit the left of the stage they wrapped around to the right again. The Sprites had cacheAsBitmap set to true (see the cacheAsBitmap note further down).
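The per-frame movement described above can be sketched roughly as follows. This is a Python illustration rather than the actual AS3 test code, and the exact wrap condition (wrapping as soon as x drops below zero) is an assumption; the original test may have waited until the sprite was fully off-stage.

```python
STAGE_WIDTH = 550   # stage width from the test setup
SPEED = 4           # pixels moved per frame


def step(xs):
    """Advance every sprite's x position by one frame.

    Sprites travel left; any sprite that passes the left edge is
    wrapped around to the right. The `x < 0` condition is assumed.
    """
    moved = []
    for x in xs:
        x -= SPEED
        if x < 0:
            x += STAGE_WIDTH
        moved.append(x)
    return moved
```

In the Sprite version each new x would be assigned to a display object; in the blit version it would become the destination point of a copy into the buffer.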

Then I ran the tests multiple times, with varying numbers of fish sprites, for varying durations, recording the data at each step and averaging it out.

I agree that this is in no way a truly “scientific” test, but I wanted a general “feeling” as a result, to see if this was an avenue still worth walking down or not.

The Results

With 500 sprites both the standard Sprite and the blit method kept a solid 30 fps frame rate. Using Sprites consumed 15MB of RAM, using blits 11MB.

At 1000 sprites we’re still at a consistent 30 fps, but there is noticeable “tearing” in the visuals as the sprites move across the stage. It’s not terrible, but you can easily see it. The standard method is now using 20MB while the blit is using 14MB.

2500 sprites and we see both techniques struggle to keep up with the 30 fps rate. The traditional Sprites actually outpace the blitting at 23 fps vs. 21 fps, but the memory consumption is more than double: 35MB vs. 15MB.

At 5000 sprites they are both starting to feel the strain, each level pegging at 12 fps. But the standard Sprites technique is using a staggering 58MB, while the blit is only up to 20MB.

7,500 sprites all moving at once and both techniques are virtually brought to their knees, managing just 8 fps each. Given the amount of data moving this isn’t totally surprising. The blit technique at this point is literally copying 18.7 million pixels around in memory every frame. The AVM’s internal dirty rectangle system is feeling the full force of what’s going on, however, and is now consuming 237MB of RAM vs. the blit technique’s 25MB.

10,000 sprites crashes the Debug player for both versions; it literally runs out of memory 🙂

cacheAsBitmap

As I mentioned at the start, the Sprite version had cacheAsBitmap set to true. This is the main cause of the huge amount of RAM being used. As our Sprite only contained a single Bitmap, this wasn’t needed. By removing this setting the amount of RAM used dropped, ending up only a few MB higher than the straight blit method.

Our Findings

So what can we pull from this?

First of all, the AVM dirty rectangles implementation is pretty damn sweet! But brute-force blitting is just as fast in this test case. Logic tells us that adding redraw-aware optimisation to our blit engine should tip the balance significantly in our favour.

NEVER enable cacheAsBitmap on a Sprite or MovieClip if all it contains is bitmap data.

The blit engine uses less memory. If you need to cache vector Sprites in your game, then it uses considerably less memory!

No-one really needs a game with 7,500 fish swimming around in it 😉

Maybe the test wasn’t “real world” enough – even at the 1000 sprite level (at which both methods kept a 30 fps frame rate) we were still moving 2.5 million pixels around a 550 x 400 stage. That’s enough to fill the stage 11 times over (and still have some spare). Is this likely in a real game? Well no, I don’t believe so – but it isn’t that far off either. Games are getting bigger (we published one at 800×600 today for example), and if you had a game featuring multiple layers going on (foreground, player, background, distance, etc) with alpha showing through them all, then it doesn’t take long to start using pixels in the millions range.
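The pixel counts quoted in this post follow directly from the test setup (50×50 sprites on a 550 x 400 stage). As a quick check of the arithmetic, sketched in Python; the function and constant names are just for illustration:

```python
STAGE_PIXELS = 550 * 400   # 220,000 pixels on the stage
SPRITE_PIXELS = 50 * 50    # 2,500 pixels per sprite


def pixels_per_frame(sprite_count):
    """Pixels copied each frame when every sprite is blitted in full."""
    return sprite_count * SPRITE_PIXELS


for count in (500, 1000, 2500, 5000, 7500):
    pixels = pixels_per_frame(count)
    fills = pixels / STAGE_PIXELS
    print(f"{count:>5} sprites: {pixels:>10,} pixels, {fills:.1f} stage fills")
```

At 1000 sprites this gives 2,500,000 pixels, a little over 11 full stages per frame, and at 7,500 sprites it gives 18,750,000 pixels.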

There are instances when I believe it’s just easier to deal with things on a blit level – for example building up a large n-way scrolling tilemap, where you constantly need to redraw the scroll buffers. Doing the same by placing (and updating) hundreds of Sprites would be an exercise in pain I wouldn’t wish on anyone.
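As a rough illustration of why the blit approach suits scrolling tilemaps, the sketch below works out which tiles overlap the viewport and where each one lands in the buffer. It is Python rather than AS3, and the tile size, function names and tuple layout are assumptions made for the example, not anything from PixelBlitz.

```python
def visible_tiles(scroll_x, scroll_y, view_w, view_h, tile_size):
    """Return (first_col, first_row, last_col, last_row), inclusive,
    for the tiles that overlap the viewport at this scroll offset."""
    first_col = scroll_x // tile_size
    first_row = scroll_y // tile_size
    last_col = (scroll_x + view_w - 1) // tile_size
    last_row = (scroll_y + view_h - 1) // tile_size
    return first_col, first_row, last_col, last_row


def blit_list(scroll_x, scroll_y, view_w, view_h, tile_size):
    """Yield (col, row, dest_x, dest_y) for every tile to copy this frame.

    dest_x / dest_y are buffer coordinates and may be negative for
    tiles that are only partly on screen.
    """
    c0, r0, c1, r1 = visible_tiles(scroll_x, scroll_y,
                                   view_w, view_h, tile_size)
    for row in range(r0, r1 + 1):
        for col in range(c0, c1 + 1):
            yield (col, row,
                   col * tile_size - scroll_x,
                   row * tile_size - scroll_y)
```

With a hypothetical 32-pixel tile on the 550 x 400 stage, each frame is a couple of hundred copies driven by two nested loops, with no display objects to create, reposition or remove as the map scrolls.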

Is a combination of both worlds the way to go? Quite possibly. While I loathe using the timeline (or MovieClips in general) for anything, they do offer Flash animators a feature-rich tool-set that lets them create vibrant moving games. The blit method, by contrast, requires graphic artists trained in the way of the pixel, and I believe those are a dying (and expensive) breed indeed. Creating quality animations at that level is time-consuming and costly. But as we’ve seen, animating on a vector level introduces both resource and speed issues into your game.

What about collision detection? Well, we all know this pretty much sucks in Flash, so we have to roll our own methods anyway. For pixel-perfect collision detection we need to inspect the elements on a pixel level (surprise surprise). At least with the blit technique we’re already operating on that level, so there’s no extra draw() overhead involved.
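For readers who haven’t rolled their own before, a pixel-level check usually boils down to something like the sketch below: intersect the two bounding boxes, then look for any pixel in the overlap that is solid in both images. This is an illustrative Python version, assuming each sprite’s alpha channel has already been reduced to a grid of solid / not-solid flags; the function name and mask format are made up for the example.

```python
def pixel_collision(mask_a, ax, ay, mask_b, bx, by):
    """Return True if two sprites share at least one solid pixel.

    mask_a / mask_b are lists of rows, with a truthy value marking a
    solid (non-transparent) pixel. (ax, ay) and (bx, by) are the
    top-left positions of each sprite on the stage.
    """
    ah, aw = len(mask_a), len(mask_a[0])
    bh, bw = len(mask_b), len(mask_b[0])

    # Intersection of the two bounding boxes.
    left, right = max(ax, bx), min(ax + aw, bx + bw)
    top, bottom = max(ay, by), min(ay + ah, by + bh)
    if left >= right or top >= bottom:
        return False  # boxes don't overlap, so the pixels can't either

    # Only the overlapping region needs a per-pixel check.
    for y in range(top, bottom):
        for x in range(left, right):
            if mask_a[y - ay][x - ax] and mask_b[y - by][x - bx]:
                return True
    return False
```

The bounding-box test comes first because it is cheap and rules out most pairs before any pixels are touched.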

Conclusion

Are AS3 Sprites “evil” for those of you trying to create arcade style games? No, I don’t believe so. They can hold their own in the speed stakes thanks to the power of the AVM, but you do have to watch yourself and be very careful re: memory consumption.

Is “blitting” really that much faster than using normal Sprites? No, it isn’t. It does have less memory overhead and a “cleaner” feel to it, but it’s no speed demon in comparison.

Would a hybrid solution work (i.e. a fully blitted tilemap with MovieClip characters on top)? Yes, absolutely!

Don’t feel that because you have travelled down the “blit” route you need to have the whole game living there. If you can mix and match your game logic and most importantly your collision systems, then there’s no harm in splitting these elements up, using both at once.

P.S. If you’ve got some ideas or concepts on optimising blit level drawing, please get in touch. I’ve been reading a lot about this recently (what I can find at least) but it’s always good to pick someone’s brain.

Posted on September 19th 2008 at 1:12 am by Rich.
